Building Smarter Custom GPTs: The Rise of the API Test Navigator Framework

The Rise of API-Powered GPTs

In 2025, generative AI systems have evolved from standalone conversational tools into integrated data interfaces. Developers and organizations are now building custom GPTs that connect to live APIs—fetching market data, weather reports, best-seller lists, or internal business metrics—through natural language.

These API-powered GPTs blur the line between chatbots and intelligent data services. They can query, analyze, and summarize live information from multiple sources with a single user request.

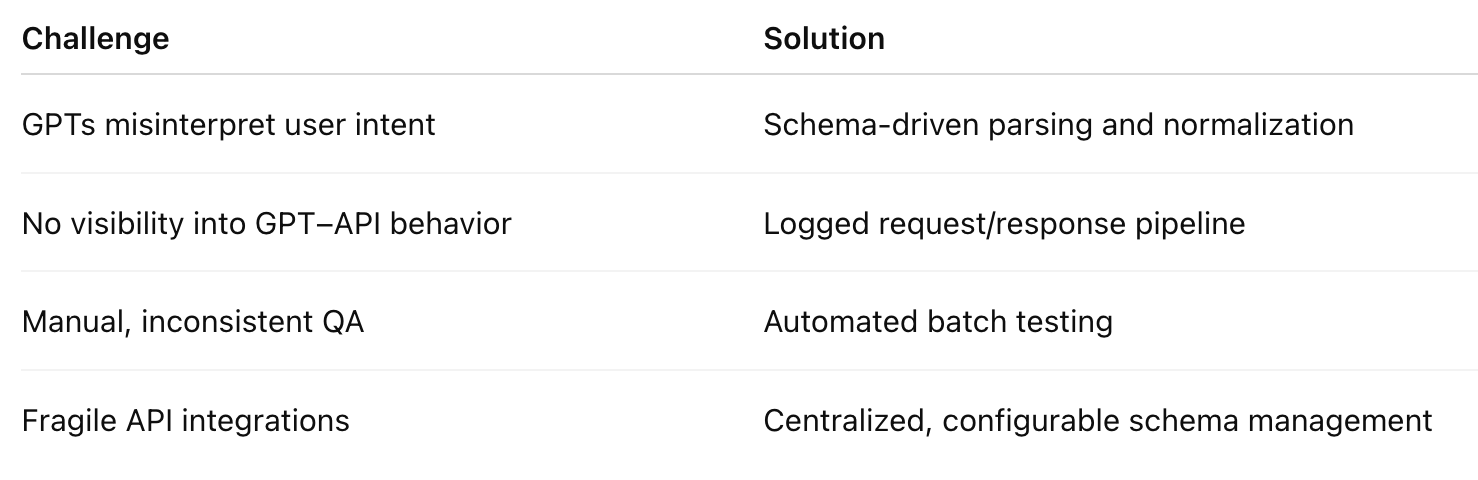

However, this new paradigm brings a critical problem: ensuring that GPTs interpret human language accurately and translate it into valid API requests.

The Problem: "Prompt to API" Is the New Black Box

When a user says, "Show me the top hardcover fiction best sellers this week", the GPT must decide:

Which API endpoint to use

How to interpret “this week” (as a date)

What parameter values to send

How to format and validate the request

For example, in the New York Times Books API, that simple prompt must translate into:

GET /lists/current/hardcover-fiction.json?api-key=yourkey

If the GPT misinterprets “fiction” or fails to normalize “this week,” it could call the wrong endpoint, send malformed parameters, or fail silently.

This problem repeats across industries. A weather GPT might misread “tomorrow” or “next weekend.” A finance GPT might confuse “AAPL” with “Apple Inc.” Each integration adds complexity and risk.
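To make the date problem concrete, here is a minimal sketch of relative-date normalization in Python. The function name and the small phrase table are hypothetical and are not part of the framework described here. The point it illustrates is that an unrecognized phrase such as “next weekend” should raise an explicit error rather than fail silently.

```python
from datetime import date, timedelta

# Hypothetical phrase table; a real integration would cover far more wording.
_OFFSETS = {"yesterday": -1, "today": 0, "tomorrow": 1}


def normalize_relative_date(phrase: str, today: date) -> str:
    """Turn a relative date phrase into an ISO date string (YYYY-MM-DD)."""
    key = phrase.strip().lower()
    if key not in _OFFSETS:
        # Refuse to guess: an explicit error is easier to debug than a wrong call.
        raise ValueError(f"unrecognized date phrase: {phrase!r}")
    return (today + timedelta(days=_OFFSETS[key])).isoformat()


print(normalize_relative_date("tomorrow", date(2025, 5, 4)))  # 2025-05-05
```

Passing `today` in explicitly keeps the function deterministic, which makes it straightforward to cover in automated tests.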

As organizations deploy GPTs connected to external APIs, developers need a systematic way to test and validate how language is converted into code. They need visibility into what the GPT is doing when it speaks to an API.

The Solution: The API Test Navigator Framework

The API Test Navigator Framework was created to close this gap. It’s a modular, schema-driven testing framework designed for developers who build GPTs that integrate with APIs.

Its purpose is to make GPT–API interactions transparent, testable, and reliable.

Instead of guessing whether a GPT interpreted a prompt correctly, developers can see every step: intent detection, entity extraction, endpoint selection, parameter normalization, and live API results.

Framework Overview

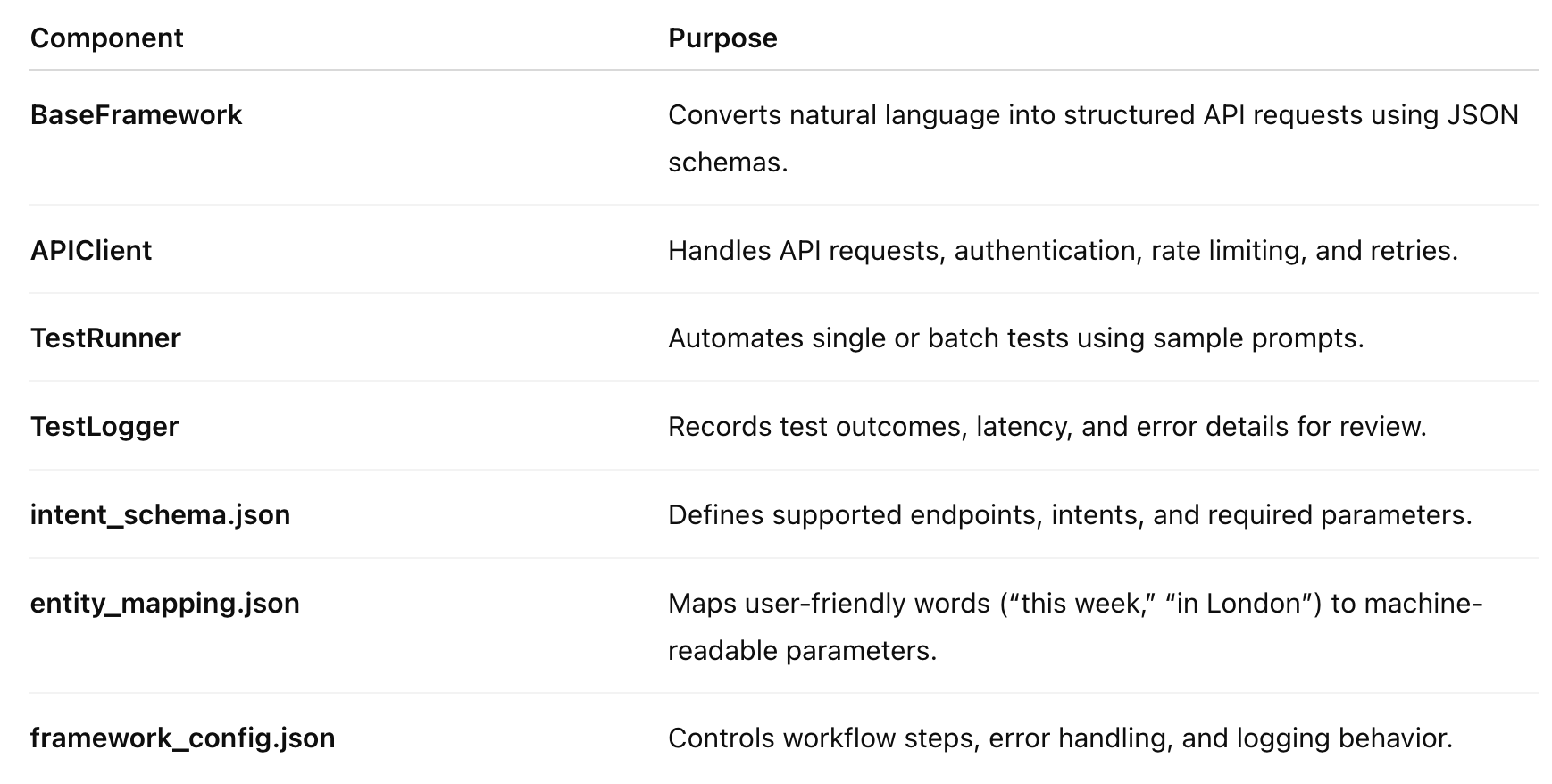

The API Test Navigator Framework includes the following core components:

Together, these components simulate or execute the full prompt → API → response pipeline for any custom GPT.

How It Works

Developer Input:

A user prompt such as “Get the NYT hardcover fiction list for May 4, 2025” is entered into the framework.

Intent Detection:

The system identifies the relevant endpoint, lists_list, and extracts keywords such as “fiction” and “May 4, 2025.”

Entity Mapping and Normalization:

The phrase “May 4, 2025” is converted to 2025-05-04. The category “fiction” is mapped to the slug hardcover-fiction.

Request Construction:

The final call is built:

/lists/2025-05-04/hardcover-fiction.json?api-key=yourkey

API Execution:

In live mode, the framework calls the API and captures the real response. In offline mode, it simulates a response.

Logging and Validation:

Results are stored, including request metadata, response time, HTTP status, and top results.

The output makes the GPT’s reasoning process fully auditable.
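The steps above can be sketched in a few lines of Python. This is an illustrative approximation, not the framework's actual code: `build_request`, `LIST_SLUGS`, and `DATE_PATTERN` are hypothetical names, the sketch knows only the lists_list intent, and it stops at request construction without calling the API.

```python
import re
from datetime import datetime

# Hypothetical slug table: phrases a user might say -> list slugs.
LIST_SLUGS = {
    "hardcover fiction": "hardcover-fiction",
    "audio nonfiction": "audio-nonfiction",
}

# Matches dates written like "May 4, 2025".
DATE_PATTERN = re.compile(r"[A-Z][a-z]+ \d{1,2}, \d{4}")


def build_request(prompt: str) -> dict:
    """Trace a prompt through entity mapping, normalization, and request construction."""
    text = prompt.lower()

    # Entity mapping: the first known phrase found in the prompt wins.
    slug = next((s for phrase, s in LIST_SLUGS.items() if phrase in text), None)
    if slug is None:
        raise ValueError("no known list category in prompt")

    # Date normalization: "May 4, 2025" -> "2025-05-04"; no date means the current list.
    match = DATE_PATTERN.search(prompt)
    if match:
        published = datetime.strptime(match.group(), "%B %d, %Y").date().isoformat()
    else:
        published = "current"

    return {
        "intent": "lists_list",  # the only intent this sketch handles
        "params": {"date": published, "list": slug},
        "path": f"/lists/{published}/{slug}.json",
    }


result = build_request("Get the NYT hardcover fiction list for May 4, 2025")
print(result["path"])  # /lists/2025-05-04/hardcover-fiction.json
```

Returning the intermediate values alongside the final path is what makes each step inspectable. A test can assert on the normalized date or the slug separately from the assembled URL.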

Who Needs This Framework

1. AI Product Engineers

Developers who design GPT-powered tools need to confirm that the system interprets user prompts correctly and interacts safely with live APIs. This framework allows them to test endpoints automatically, ensuring GPT reliability.

2. Data Integration Engineers

Engineers responsible for connecting APIs to GPTs must handle changing endpoints, API versions, and authentication keys. The framework validates that each endpoint remains functional and synchronized with its schema.

3. AI QA Engineers

Quality assurance specialists can use this framework to test natural-language variations. Instead of manually checking hundreds of phrasing differences, they can use batch testing to measure intent accuracy and parameter mapping performance.
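A batch test of this kind might look like the following sketch. The keyword-based `detect_intent` is a toy stand-in for whatever the GPT actually does, and both function names and the fourth test prompt are hypothetical. The shape of the harness is the point: a list of phrasings with expected intents, an accuracy figure, and the prompts that missed.

```python
from typing import Callable


def detect_intent(prompt: str) -> str:
    """Toy keyword-based stand-in for the GPT's intent detection (hypothetical)."""
    return "overview" if "overview" in prompt.lower() else "lists_list"


def intent_accuracy(
    cases: list[tuple[str, str]], detect: Callable[[str], str]
) -> tuple[float, list[str]]:
    """Return the fraction of prompts mapped to the expected intent, plus the misses."""
    failures = [prompt for prompt, expected in cases if detect(prompt) != expected]
    return 1 - len(failures) / len(cases), failures


CASES = [
    ("Show me this week's NYT best sellers overview.", "overview"),
    ("Give me an overview of the best sellers", "overview"),
    ("Top audio nonfiction books this week.", "lists_list"),
    ("Summarize all the best seller lists", "overview"),  # phrasing the toy detector misses
]

accuracy, failures = intent_accuracy(CASES, detect_intent)
print(f"accuracy={accuracy:.2f}, failures={failures}")
```

Listing the failing prompts, not only the score, tells the engineer which phrasings need attention.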

4. MLOps and AIOps Teams

Teams managing GPT deployments in production can continuously validate API performance, log request patterns, and identify failures. The framework provides visibility into rate limits, latency, and errors.
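As a rough illustration of that visibility, the sketch below summarizes a batch of logged requests. The `RequestLog` record and `summarize` function are hypothetical, and the sample values are made up for the example. It treats HTTP 429 (Too Many Requests) as the rate-limit signal.

```python
from dataclasses import dataclass


@dataclass
class RequestLog:
    endpoint: str
    status: int
    latency_ms: float


def summarize(logs: list[RequestLog]) -> dict:
    """Aggregate error rate, rate-limit hits, and worst-case latency."""
    total = len(logs)
    errors = sum(1 for r in logs if r.status >= 400)
    rate_limited = sum(1 for r in logs if r.status == 429)
    return {
        "requests": total,
        "error_rate": errors / total,
        "rate_limited": rate_limited,
        "max_latency_ms": max(r.latency_ms for r in logs),
    }


# Illustrative records only; not real measurements.
LOGS = [
    RequestLog("/lists/overview.json", 200, 120.0),
    RequestLog("/lists/current/audio-nonfiction.json", 200, 95.0),
    RequestLog("/lists/overview.json", 429, 30.0),
    RequestLog("/lists/overview.json", 500, 410.0),
]

print(summarize(LOGS))
```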

Example: Testing a NYT Best Sellers GPT

Prompt:

“Show me this week’s NYT best sellers overview.”

Framework Output:

Intent detected: overview

Endpoint: /lists/overview.json

Parameters: { "published_date": "current" }

Full URL: https://api.nytimes.com/svc/books/v3/lists/overview.json

Live API success

Top title: THE BLACK WOLF by Louise Penny

Prompt:

“Top audio nonfiction books this week.”

Framework Output:

Intent detected: lists_list

Endpoint: /lists/current/audio-nonfiction.json

Parameters: { "date": "current", "list": "audio-nonfiction" }

Top 3 books:

1. NOBODY'S GIRL by Virginia Roberts Giuffre

2. 1929 by Andrew Ross Sorkin

3. 107 DAYS by Kamala Harris

Each test verifies both the GPT’s parsing logic and the API’s response integrity.
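A response-integrity check could be as simple as the sketch below. It assumes the list payload nests its entries under `results.books` with `rank`, `title`, and `author` fields. That shape is an assumption made for the example and should be confirmed against the API's own documentation. The function name is hypothetical, and the sample payload reuses the titles shown above.

```python
REQUIRED_BOOK_FIELDS = ("rank", "title", "author")


def validate_list_response(payload: dict) -> list[str]:
    """Return a list of problems found; an empty list means the payload passed."""
    results = payload.get("results")
    books = results.get("books") if isinstance(results, dict) else None
    if not isinstance(books, list) or not books:
        return ["results.books is missing or empty"]

    problems = []
    for i, book in enumerate(books):
        for field in REQUIRED_BOOK_FIELDS:
            if field not in book:
                problems.append(f"books[{i}] is missing {field!r}")
    return problems


# Sample payload in the assumed shape, using the titles from the output above.
SAMPLE = {
    "results": {
        "books": [
            {"rank": 1, "title": "NOBODY'S GIRL", "author": "Virginia Roberts Giuffre"},
            {"rank": 2, "title": "1929", "author": "Andrew Ross Sorkin"},
            {"rank": 3, "title": "107 DAYS", "author": "Kamala Harris"},
        ]
    }
}

print(validate_list_response(SAMPLE))  # []
```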

Why This Framework Matters

1. Transparency

Developers and stakeholders can see exactly how a GPT interprets user input and constructs API requests. This eliminates uncertainty and allows for targeted debugging.

2. Reliability

Automated testing helps GPT–API integrations remain stable even as endpoints evolve, because breaking changes surface in test runs before they reach users.

3. Maintainability

Because all endpoints and parameters are defined in JSON schemas, updates can be made without retraining or redeploying the GPT.
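The sketch below shows what such a schema-driven setup might look like. The JSON layout and the `build_url` helper are hypothetical, since the framework's actual schema format is not shown here. The idea is that changing an endpoint path means editing the JSON, not the code.

```python
import json

# Hypothetical schema layout; the endpoint paths come from the examples in this article.
SCHEMA_JSON = """
{
  "base_url": "https://api.nytimes.com/svc/books/v3",
  "endpoints": {
    "overview": {"path": "/lists/overview.json", "params": []},
    "lists_list": {"path": "/lists/{date}/{list}.json", "params": ["date", "list"]}
  }
}
"""


def build_url(schema: dict, intent: str, params: dict) -> str:
    """Look up the endpoint for an intent and fill its path template."""
    endpoint = schema["endpoints"][intent]
    missing = [p for p in endpoint["params"] if p not in params]
    if missing:
        raise ValueError(f"missing parameters for {intent}: {missing}")
    return schema["base_url"] + endpoint["path"].format(**params)


schema = json.loads(SCHEMA_JSON)
print(build_url(schema, "lists_list", {"date": "current", "list": "audio-nonfiction"}))
```

Checking for missing parameters before formatting turns a malformed request into an explicit error at build time.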

4. Standardization

This framework applies to any API domain—news, finance, weather, commerce—simply by swapping configuration files. It introduces a standardized testing methodology for all GPT–API interfaces.

A New Role: The GPT Integration Engineer

This framework also defines a new specialization within AI development: the GPT Integration Engineer.

This professional sits at the intersection of machine learning, software engineering, and systems integration.

Key Responsibilities:

Designing and maintaining schema-driven interfaces between GPTs and APIs

Running automated prompt-to-API validation tests

Monitoring response consistency and latency

Ensuring compliance and reliability across deployments

In short, the GPT Integration Engineer ensures that GPTs remain explainable, compliant, and operational at scale.

Conclusion

As custom GPTs continue to expand into live, API-driven ecosystems, the need for structured testing and validation becomes unavoidable. Traditional QA methods, built around fixed and well-formed inputs, are not enough on their own. The complexity of natural language interpretation requires a framework that bridges linguistic reasoning and software engineering.

The API Test Navigator Framework addresses that need directly. It transforms GPT–API integrations from opaque, unpredictable systems into transparent, testable, and maintainable components of modern software infrastructure.

It’s not just a tool—it’s a methodology for building GPTs that businesses can trust.

Summary

This framework marks a shift in how AI applications are developed and maintained. In the coming years, tools like API Test Navigator will be essential for every organization building intelligent systems that rely on live data.

The age of the testable GPT has arrived.