AI Search Models vs AI Shopping Models: A New Frontier in Search and Commerce

Introduction

Artificial intelligence is transforming how we search for information and shop for products, creating two distinct but interrelated categories of systems: AI Search Models and AI Shopping Models.

AI Search Models – such as OpenAI’s ChatGPT, Anthropic’s Claude, Perplexity’s answer engine, and Google’s Gemini – act as conversational search assistants. They are designed to tackle general knowledge queries, summarize complex topics, and serve as broad information companions.

AI Shopping Models – including Amazon’s Rufus, Walmart’s Sparky, and Target’s Bullseye – function as digital shopping assistants. Their mission is to guide customers through product discovery, recommendation, and ultimately conversion at the point of sale.

The difference lies not only in their back-end architectures but in the way users experience them. Search models are optimized for knowledge and exploration, while shopping models are optimized for personalization and decision-making. This divergence shows up across interfaces, multimodal features, navigation flows, and trust cues.

Equally important is how these systems handle data integration and personalization. Search AIs draw on vast unstructured knowledge from the web and, in some cases, user-uploaded documents. Shopping AIs connect deeply to structured product catalogs, reviews, inventory systems, and user purchase histories to make real-time, tailored recommendations. These different data strategies shape not only the answers users see but also the trust, utility, and visibility of each model.

For brands, this new landscape introduces a fresh challenge: AI visibility. Where once the priority was ranking high on Google’s search results, the goal today is ensuring that LLM-powered assistants surface your brand, product, or service when customers ask questions. And since search models and shopping models serve different purposes, brands must develop two complementary—but overlapping—playbooks. Some visibility tactics (like structured data and citations) apply to both categories, while others (like catalog optimization and retail integration) are unique to shopping.

This essay provides a comparative overview of AI Search and Shopping Models across:

Use cases and technical approaches

User experience (UX) design differences

Data integration and personalization strategies

Enterprise system integration

Implications for AI visibility engineering

We also include feature comparison tables and insights on discoverability, interpretability, and operational readiness, highlighting how brands can adapt to this evolving ecosystem.

Defining AI Search Models

AI Search Models are generative AI systems designed to retrieve and synthesize information in response to user queries, often in a conversational format. They leverage large language models (LLMs) trained on vast corpora (e.g. web text, books, code) and use advanced dialog techniques to provide direct answers, explanations, or creative content instead of a list of links. Notable examples include:

ChatGPT (OpenAI): A versatile AI chatbot known for articulate, multi-turn conversations on virtually any topic. ChatGPT can answer follow-up questions, write and debug code, translate languages, summarize text, and more. It supports text, audio, and image inputs, and OpenAI continues to extend it with features such as web search and custom GPTs. This breadth has made ChatGPT one of the fastest-growing consumer apps in history, and it is widely credited with accelerating the current “AI boom”.

Claude (Anthropic): An AI assistant developed by Anthropic with an emphasis on being helpful, honest, and harmless. Claude is accessible via chat interface or API and is capable of a wide range of text processing and conversational tasks. Early users found Claude highly steerable (easy to guide in tone/personality) and less likely to produce harmful outputs. It excels at summarization, Q&A, creative writing, and coding, and is designed to maintain reliability and predictability. In essence, Claude positions itself as a safer, more easily directed alternative in the AI search arena.

Perplexity AI: A conversational search engine that combines LLMs with live web search to deliver precise answers with source citations. Unlike traditional search engines that return a list of links, Perplexity answers questions directly in natural language, citing relevant sources for transparency. This “answer engine” offers a free tier and retrieves up-to-date information for each query. Its emphasis on accuracy and trust shows in features like referenced excerpts and multiple source links for verification. In late 2024 it also introduced shopping features, showing product cards with images, prices, and AI-generated summaries, bridging the gap between informational search and e-commerce.

Gemini (Google DeepMind): A multimodal LLM developed by Google DeepMind, succeeding models like LaMDA and PaLM 2. Announced in late 2023 and continually improved, Gemini is positioned as Google’s answer to GPT-4-level capabilities. The Gemini family (Ultra, Pro, Flash, Nano) is designed to handle text and other modalities, exhibiting advanced reasoning and coding skills. By 2025, the latest Gemini 2.5 model led several AI benchmarks, demonstrating “thinking” abilities through chain-of-thought reasoning for complex problem solving. Google has integrated Gemini into its ecosystem – powering conversational features (the Gemini chatbot) and developer offerings on Google Cloud. In the context of search, Gemini underpins Google’s next-generation AI search experiences, aiming to keep Google Search at the cutting edge of AI-enhanced information retrieval.

Use Cases: AI Search Models primarily address information-seeking and knowledge work tasks. Users employ them to get direct answers or explanations (e.g. “What causes thunderstorms?”), to summarize or analyze documents, to generate content (writing help, coding assistance), or to explore new topics conversationally. These models shine in research and education contexts due to their ability to provide detailed, articulate responses across domains. They also support creative brainstorming, language translation, and even act as personal assistants (scheduling, email drafting) via integrations. In short, AI search models are generalists: they aim to answer any question or fulfill any prompt with the knowledge they’ve learned or by fetching information from external sources. This broad utility contrasts with AI shopping models, which focus on a more specialized domain – commerce – as we examine next.
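The retrieve-then-answer pattern behind answer engines such as Perplexity can be sketched in a few lines of Python. This is a minimal illustration, not any vendor’s implementation: `WEB_RESULTS` is made-up sample data standing in for a live web search API, relevance is crude keyword overlap, and the generation step is stubbed out (a real system would pass the retrieved passages to an LLM).

```python
import re

# Hypothetical search results; a real answer engine would fetch these from a live web search API.
WEB_RESULTS = [
    {"url": "https://example.org/weather/thunderstorms",
     "text": "Thunderstorms form when warm, moist air rises rapidly and cools, building cumulonimbus clouds."},
    {"url": "https://example.org/weather/lightning",
     "text": "Lightning is an electrical discharge caused by charge separation inside storm clouds."},
    {"url": "https://example.org/baking/bread",
     "text": "Bread rises because yeast produces carbon dioxide."},
]

# A small, illustrative stopword list so that question words do not count as matches.
STOPWORDS = {"what", "causes", "the", "a", "an", "of", "is", "how", "why", "do", "does"}


def terms(text: str) -> set[str]:
    """Lowercased content words, ignoring the stopword list."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def answer_with_citations(query: str, results: list[dict], k: int = 2) -> tuple[str, list[str]]:
    """Rank results by term overlap, keep the top k matches, and number them as citations."""
    q = terms(query)
    matches = [r for r in results if q & terms(r["text"])]
    matches.sort(key=lambda r: len(q & terms(r["text"])), reverse=True)
    top = matches[:k]
    if not top:
        return "No supporting sources found.", []
    # Stand-in for the LLM step: here we simply quote each passage with its citation number.
    body = " ".join(f"{r['text']} [{i}]" for i, r in enumerate(top, start=1))
    return body, [r["url"] for r in top]


answer, sources = answer_with_citations("What causes thunderstorms?", WEB_RESULTS)
print(answer)
print(sources)
```

Swapping the keyword overlap for a real search index or embedding similarity, and the quoting stub for an LLM call, gives the general shape of a citation-grounded answer.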

Defining AI Shopping Models

AI Shopping Models are generative AI systems tailored for retail and e-commerce applications. Rather than open-ended knowledge work, they help consumers find and evaluate products, make purchase decisions, and in some cases complete transactions. These models are trained or configured with extensive product data (catalogs, specs, reviews) and are often augmented with real-time information such as inventory and pricing, and sometimes with external content (e.g. trend data or expert articles), to answer shopping-related queries. Leading examples include:

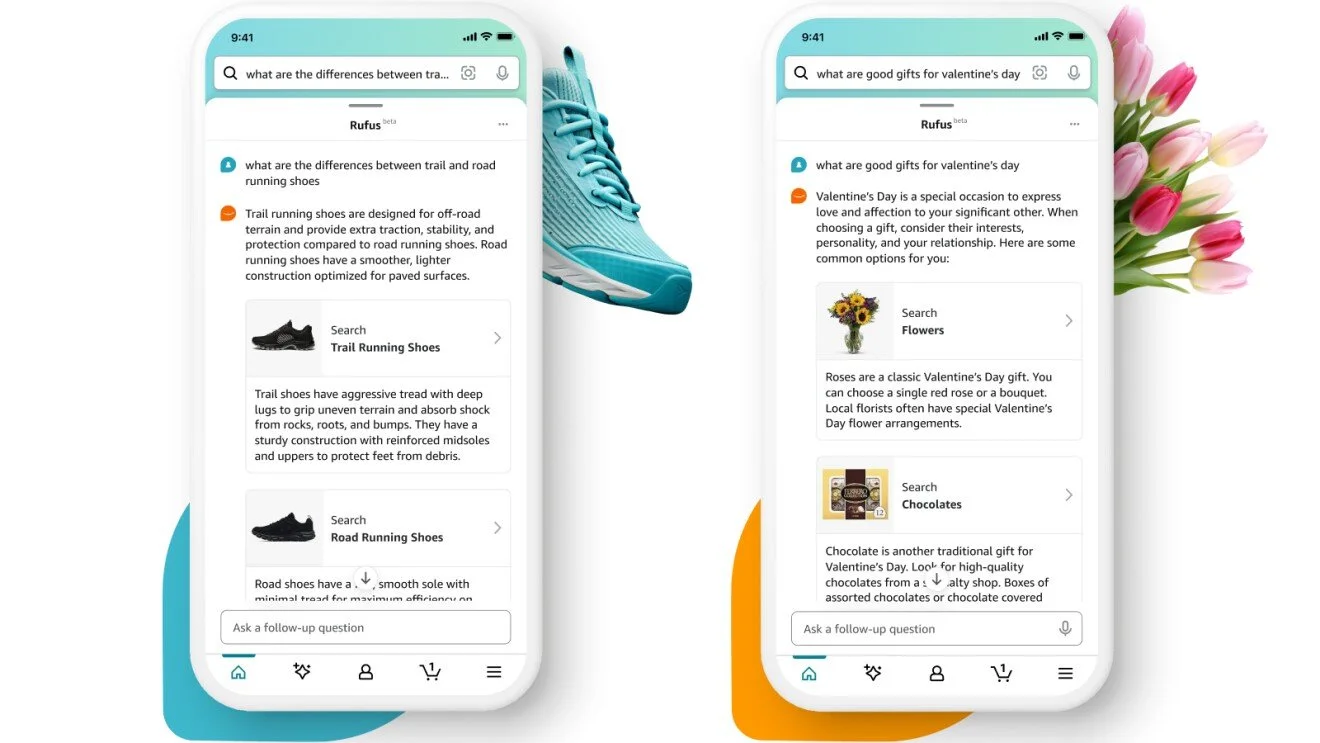

Amazon’s Rufus: A generative AI-powered conversational shopping assistant integrated into Amazon’s shopping app and website. Rufus is trained on Amazon’s vast product catalog, customer reviews, and community Q&A, as well as information from across the web. It can answer questions about products and shopping needs, provide side-by-side product comparisons, and make personalized recommendations, all within the familiar Amazon shopping experience. For example, Rufus can guide a user who asks “What should I consider when buying running shoes?” or “What’s the difference between trail and road running shoes?” and then recommend products based on that conversation. Amazon emphasizes that customers can now “shop alongside a generative AI-powered expert” that “knows Amazon’s selection inside and out”, drawing on both Amazon data and web information to help them make informed decisions. Rufus launched in beta in early 2024 and has continued to scale; under the hood it uses a large language model with retrieval-augmented generation (RAG), pulling in relevant product information from Amazon’s search results to ground its answers.

Walmart’s Sparky: A GenAI shopping assistant introduced in 2025 in the Walmart mobile app (accessible through an “Ask Sparky” button). Sparky helps customers search for items, get product insights, and plan purchases. It can synthesize product reviews, offer occasion-based recommendations, and answer detailed questions. Notably, Sparky does not confine itself to Walmart’s catalog: it can incorporate real-world context to help recommend products. For example, a user can ask which sports teams play tonight or check the weather at an upcoming vacation spot, and Sparky will suggest relevant items (team jerseys, or an outfit for the beach) based on that context. It handles product Q&A (features, differences between models, and so on) and can compare options so customers shop with confidence. Walmart envisions Sparky as a “trusted partner” for shopping that will become increasingly agentic: planned features include automatically reordering household essentials, booking services, and supporting multimodal inputs (text, images, audio, video) for richer interaction. Sparky is essentially the foundation for the future of retail at Walmart, unifying search, review summarization, and personalization under one AI umbrella.

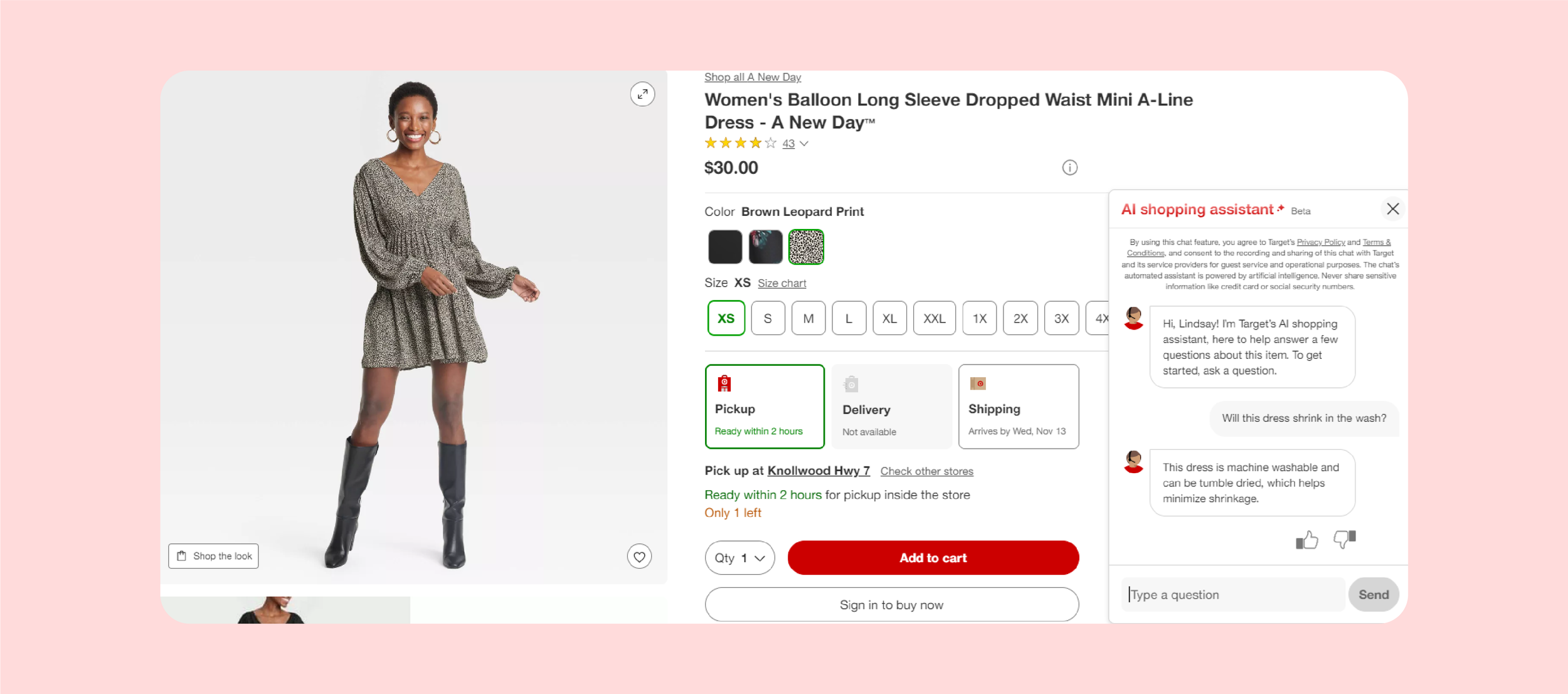

Target’s Bullseye (Gift Finder & Shopping Assistant): Target has begun experimenting with GenAI in shopping through targeted tools. In late 2024, Target launched Bullseye Gift Finder, an AI-powered tool focused on holiday toy shopping. It asks the user for criteria (age of child, interests, favorite brands, etc.) and then searches Target’s assortment to recommend the perfect gift in seconds. By pairing generative AI with Target’s product assortment, the Gift Finder can zero in on highly relevant toy suggestions, complete with product images and prices, to delight both the child and the gift-giver. Initially launched for toys, Target has been expanding it with thousands more gift ideas, indicating an evolving AI-driven recommendation engine. In addition, Target is piloting a virtual shopping assistant chat on select product pages. This chatbot appears under “About this item” for certain items and allows customers to ask detailed questions, like “Will this shirt shrink in the wash?” or “Does this lotion contain fragrance?”, receiving instant answers drawn from product data. Essentially a savvy Q&A buddy, this assistant uses generative AI to parse product descriptions, specs, and even reviews to answer specific queries in real time. These efforts at Target show a more focused deployment of AI (specific use-cases like gift-finding and product Q&A), but they underscore the trend: even outside the tech giants, retailers are leveraging AI to enhance the shopping experience.
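The Gift Finder flow described above (collect a few criteria, then search the assortment) can be approximated as hard filtering followed by ranking. Target has not published how its tool works; this sketch assumes a hypothetical `Toy` record and made-up sample products, and it leaves out the generative layer that would phrase the suggestions for the shopper.

```python
from dataclasses import dataclass, field


@dataclass
class Toy:
    # Hypothetical product record; field names are illustrative only.
    name: str
    brand: str
    min_age: int
    max_age: int
    price: float
    rating: float
    tags: set[str] = field(default_factory=set)


def find_gifts(toys: list[Toy], age: int, interests: set[str], max_price: float,
               favorite_brands: tuple[str, ...] = (), limit: int = 3) -> list[Toy]:
    """Filter by hard constraints (age range, budget), then rank by interest match, brand preference, rating."""
    candidates = [t for t in toys if t.min_age <= age <= t.max_age and t.price <= max_price]

    def score(t: Toy) -> tuple[int, bool, float]:
        return (len(t.tags & interests), t.brand in favorite_brands, t.rating)

    return sorted(candidates, key=score, reverse=True)[:limit]


# Made-up sample assortment.
catalog = [
    Toy("Dino Builder Set", "BlockCo", 6, 12, 29.99, 4.6, {"dinosaurs", "building"}),
    Toy("Space Rover Kit", "STEMWorks", 8, 14, 49.99, 4.4, {"space", "building"}),
    Toy("Plush T-Rex", "SoftPals", 2, 8, 19.99, 4.8, {"dinosaurs"}),
    Toy("Chemistry Lab", "STEMWorks", 10, 16, 39.99, 4.2, {"science"}),
]

for toy in find_gifts(catalog, age=7, interests={"dinosaurs", "building"}, max_price=40):
    print(toy.name, toy.price)
```

Keeping age and budget as hard filters, and interests and brands as ranking signals, mirrors how a shopper usually treats those criteria: a gift outside the budget is simply out, while a partial interest match is still worth showing.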

Exploring the User Experience

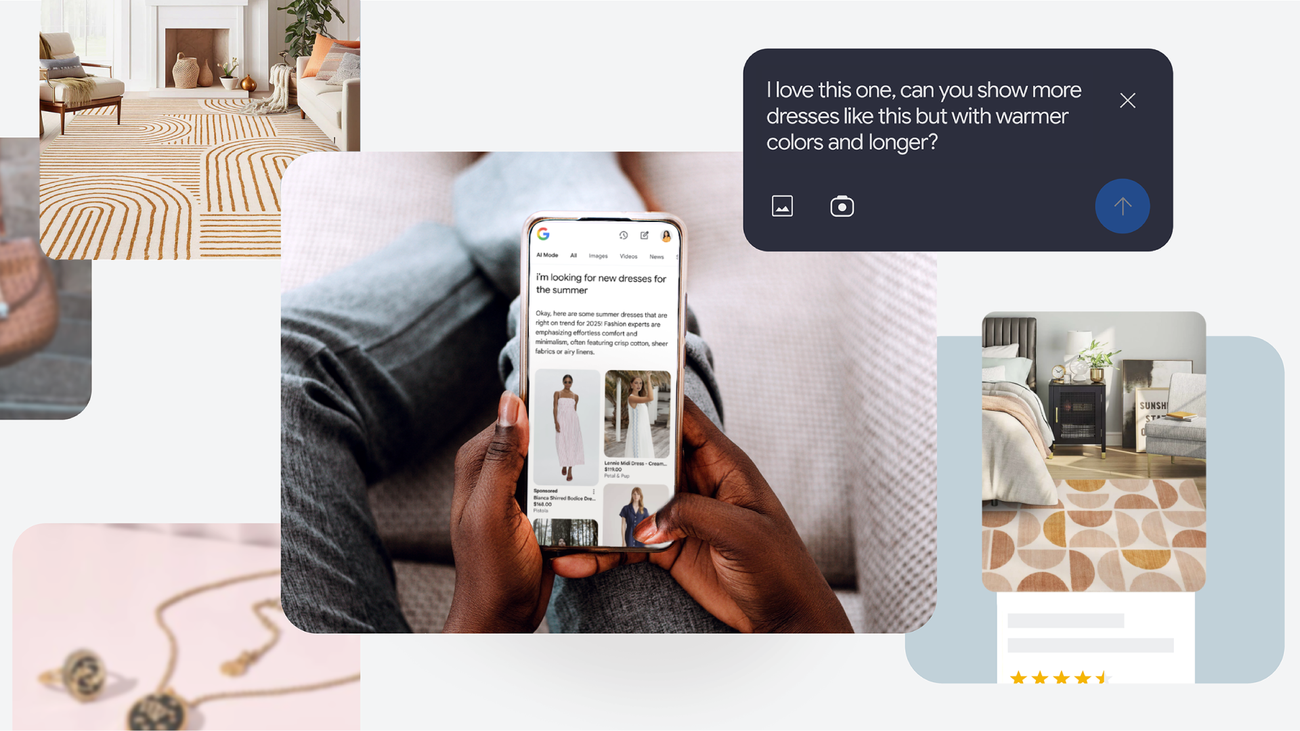

As AI systems become deeply integrated into consumer experiences, their user experience design (UX) is emerging as a critical differentiator. While AI search models like ChatGPT, Claude, Gemini, and Perplexity are built to provide knowledge and context, AI shopping models like Amazon Rufus, Walmart Sparky, and Target Bullseye are designed to facilitate decisions and conversions.

These differences show up most clearly in how users experience each system. Let’s unpack the UX contrasts across interfaces, multimodality, personalization, navigation, and trust, and why they matter for AI visibility.

1. Interface & Presentation

AI Search Models: Typically presented as chat interfaces (ChatGPT, Claude) or answer-first search results pages (Perplexity). Visual elements are minimal; answers are mostly textual summaries, often with citations and suggested follow-up queries.

AI Shopping Models: Embedded directly inside shopping apps and sites. Conversations co-exist with product images, prices, ratings, and links to purchase.

Figure 1: Amazon Rufus UI example – a shopper asks “What’s the difference between trail and road running shoes?” Rufus explains, then suggests relevant categories with product images and links.

2. Visual and Multimodal Elements

Search UX: Primarily text. Multimodal input is increasingly common (ChatGPT and Gemini accept images, for example), but answers remain mostly textual. Visuals like maps, photos, or charts are typically pulled from search indices rather than generated in real time.

Shopping UX: Rich, visual-first. Answers often embed product thumbnails, prices, and descriptions inline. Conversational answers explain why items are recommended.

3. Context and Personalization

Search UX: Generally session-based personalization. Google or Perplexity may factor in prior queries, but the assistant rarely references your purchase history or profile.

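Shopping UX: Context is explicit and persistent. Assistants can draw on purchase history, cart contents, and loyalty data, as well as the product page the shopper is viewing, to tailor answers.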

📌 Figure 2: Target’s Shopping Assistant (beta) on a product page – user asks, “Will this dress shrink in the wash?” Assistant pulls care info from product details and answers in context.

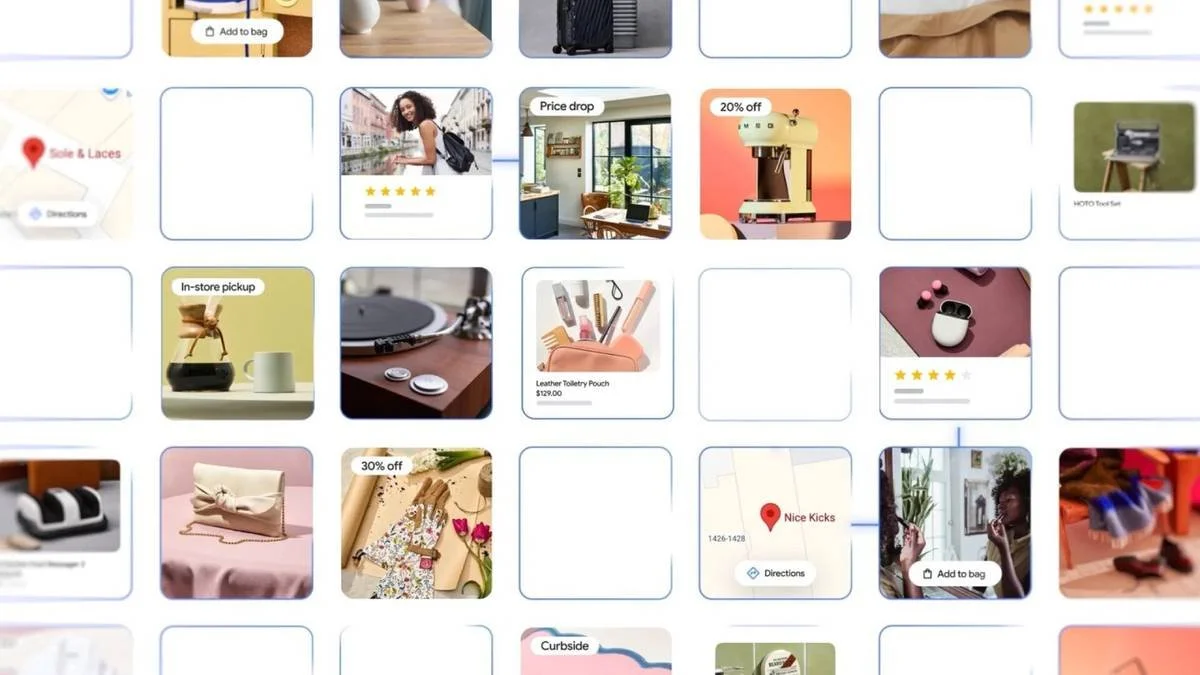

4. Navigational Aids and Conversion Paths

AI Search Models: Designed for exploration. Encourage follow-up questions, related topics, or deeper reading. Success = engagement or click-through.

AI Shopping Models: Designed for conversion. Responses frequently include “Add to cart” buttons, filters, or checkboxes embedded in chat.

5. User Guidance and Trust Cues

Search AI: Often labeled as “experimental” or “AI-generated” to educate users. Google’s AI Overviews highlight answer segments tied to sources.

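Shopping AI: Transparency and branding are key. Assistants carry the retailer’s own branding (Rufus, Sparky, Bullseye) and ground answers in visible product data such as specs, care details, and review summaries.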

These cues reassure shoppers that the AI is safe, helpful, and reliable—essential for building adoption in retail.

Competitive UX Analysis

Exploring the Data and Personalization Differences

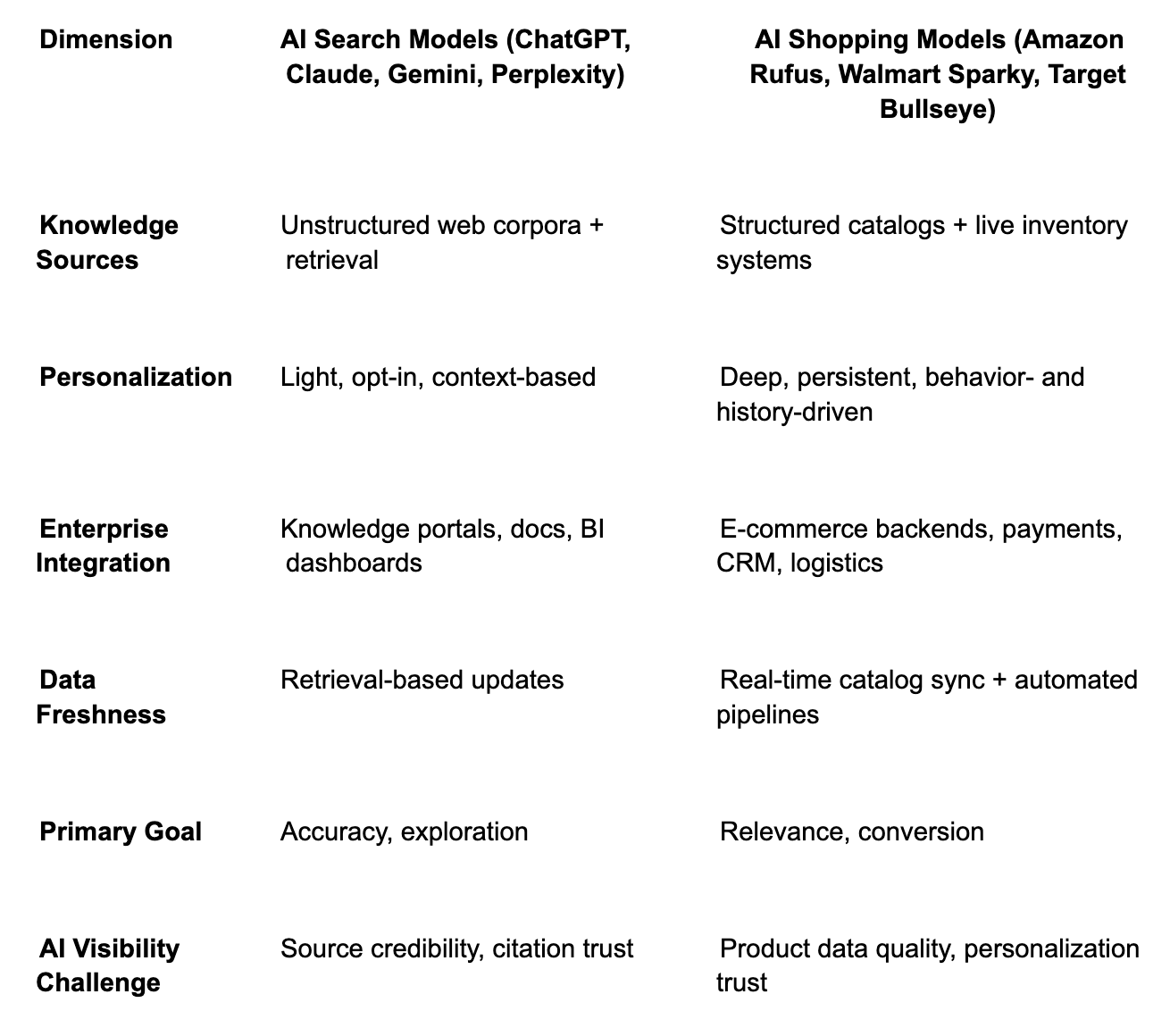

One of the most critical aspects of modern AI systems is how they handle data—both the external data they draw upon to generate answers and the user data that can tailor those answers. While both AI search models (ChatGPT, Claude, Perplexity, Gemini) and AI shopping models (Amazon Rufus, Walmart Sparky, Target Bullseye) leverage advanced large language models (LLMs), their data integration strategies and personalization techniques diverge sharply. These differences shape not only the answers users receive but also the trust, utility, and visibility of the AI systems.

1. Data Sources and Knowledge Integration

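AI Search Models: Draw on vast unstructured knowledge (web text, books, code, news, forums, academic research) learned during pretraining, optionally augmented at query time by retrieval from live web search or user-uploaded documents.

AI Shopping Models: Draw on structured retail data (product catalogs, specifications, customer reviews and Q&A, inventory and pricing systems, supplier specs), sometimes supplemented with web content.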

📌 Key Difference: Search AI = breadth from the open web; Shopping AI = depth from structured retail data sources.

This diagram illustrates the contrasting data pipelines of AI Search Models and AI Shopping Models. On the left, AI search systems such as ChatGPT, Claude, Perplexity, and Gemini follow a relatively streamlined flow: a user query is processed by a pretrained LLM, optionally augmented by a retrieval layer pulling in fresh web data or documents, before generating a text-based answer with citations. On the right, AI shopping assistants like Amazon Rufus, Walmart Sparky, and Target Bullseye require a more complex, commerce-specific integration. After the query is interpreted by a domain-tuned LLM, the system taps into structured retail databases—product catalogs, inventory systems, reviews, and supplier specs—then layers on personalization using purchase history, cart contents, and loyalty data. The final output is not just an answer but a shoppable experience, blending chat, product images, delivery timelines, and add-to-cart options. This side-by-side view highlights how search AI is optimized for exploration and information, while shopping AI is built for conversion and personalization.
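The shopping path in the diagram adds steps that a pure answer engine does not need: structured catalog lookup, an inventory check, and shoppable output. A minimal sketch under stated assumptions: the catalog, inventory table, `ProductCard` type, and `fake_llm` stub are all hypothetical, and matching is naive keyword search rather than a domain-tuned model.

```python
from dataclasses import dataclass


@dataclass
class ProductCard:
    # Hypothetical shoppable unit returned alongside the chat text.
    sku: str
    title: str
    price: float
    delivery_days: int


def fake_llm(query: str, context: list[str]) -> str:
    """Placeholder for the LLM call; returns a canned summary of the grounded items."""
    return f"{len(context)} in-stock option(s) for '{query}': " + ", ".join(context)


def shopping_pipeline(query: str, catalog: list[dict], inventory: dict, llm=fake_llm):
    """Match catalog rows, drop out-of-stock SKUs, and return text plus shoppable product cards."""
    words = query.lower().split()
    matches = [p for p in catalog if any(w in p["title"].lower() for w in words)]
    in_stock = [p for p in matches if inventory.get(p["sku"], {}).get("qty", 0) > 0]
    cards = [
        ProductCard(p["sku"], p["title"], p["price"], inventory[p["sku"]]["delivery_days"])
        for p in in_stock
    ]
    return llm(query, [c.title for c in cards]), cards


# Made-up sample data.
CATALOG = [
    {"sku": "A1", "title": "Road Running Shoes", "price": 89.0},
    {"sku": "B2", "title": "Trail Running Shoes", "price": 99.0},
    {"sku": "C3", "title": "Yoga Mat", "price": 25.0},
]
INVENTORY = {"A1": {"qty": 5, "delivery_days": 2}, "B2": {"qty": 0, "delivery_days": 3}}

text, cards = shopping_pipeline("running shoes", CATALOG, INVENTORY)
print(text)
print(cards)
```

The inventory filter runs before the model sees anything, so the generated text can only mention items the shopper can actually buy.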

2. Personalization Techniques

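AI Search Models: Personalization is generally session-based. The assistant may factor in earlier turns or prior queries but rarely draws on a user’s purchase history or profile.

AI Shopping Models: Personalization draws on persistent signals such as purchase history, cart contents, and loyalty data to tailor recommendations in real time.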

📌 Key Difference: Search AI personalizes lightly; Shopping AI personalizes heavily, with direct impact on recommendations and conversions.
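One simple way to picture heavier personalization on the shopping side is re-ranking: candidates that already match the query are reordered using signals from the shopper’s history. The linear blend and the `alpha` weight below are illustrative assumptions, not any retailer’s published method.

```python
from collections import Counter


def category_affinity(purchase_history: list[dict]) -> dict[str, float]:
    """Share of past purchases in each category, e.g. {'running': 0.75, 'kitchen': 0.25}."""
    counts = Counter(item["category"] for item in purchase_history)
    total = sum(counts.values())
    return {cat: n / total for cat, n in counts.items()} if total else {}


def personalize(candidates: list[dict], purchase_history: list[dict], alpha: float = 0.7) -> list[dict]:
    """Blend query relevance (weight alpha) with category affinity (weight 1 - alpha)."""
    affinity = category_affinity(purchase_history)

    def blended(c: dict) -> float:
        return alpha * c["relevance"] + (1 - alpha) * affinity.get(c["category"], 0.0)

    return sorted(candidates, key=blended, reverse=True)


# Made-up sample data.
history = [{"category": "running"}] * 3 + [{"category": "kitchen"}]
candidates = [
    {"sku": "K1", "category": "kitchen", "relevance": 0.80},
    {"sku": "R1", "category": "running", "relevance": 0.70},
]
print([c["sku"] for c in personalize(candidates, history)])
```

With an empty history the affinity term is zero for every item, so the ranking falls back to pure query relevance.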

3. Integration with Enterprise Systems

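AI Search Models: Integrate with knowledge ecosystems: web search and retrieval layers, user-uploaded documents, developer APIs, and cloud platforms (for example, Gemini on Google Cloud).

AI Shopping Models: Integrate with commerce ecosystems: product catalogs, inventory systems, review platforms, supplier data, and cart and checkout flows inside the retailer’s app or site.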

📌 Key Difference: Search AI integrates into knowledge ecosystems; Shopping AI integrates into commerce ecosystems.

4. Data Freshness and Maintenance

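AI Search Models: Rely on knowledge fixed at training time, refreshed at query time through live web retrieval (as Perplexity does).

AI Shopping Models: Depend on real-time inventory, pricing, and delivery data, plus personalization signals that change with every session and purchase.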

📌 Key Difference: Search = “evergreen knowledge + fresh retrieval”; Shopping = “real-time inventory + dynamic personalization.”
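The “real-time inventory” side of that contrast is largely an operations problem: a shopping assistant is only as current as the feeds behind it. A minimal sketch of a staleness check, assuming each catalog record carries a timezone-aware `updated_at` timestamp; the 24-hour default threshold is an arbitrary illustrative choice.

```python
from datetime import datetime, timedelta, timezone


def stale_records(records: list[dict], now: datetime, max_age: timedelta = timedelta(hours=24)) -> list[str]:
    """Return SKUs whose price/stock data is older than max_age and should be refreshed."""
    return [r["sku"] for r in records if now - r["updated_at"] > max_age]


# Made-up sample records.
now = datetime(2025, 1, 10, 12, 0, tzinfo=timezone.utc)
records = [
    {"sku": "A1", "updated_at": now - timedelta(hours=2)},
    {"sku": "B2", "updated_at": now - timedelta(days=3)},
]
print(stale_records(records, now))  # ['B2']
```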

Comparative Scoring Table

The contrast is clear:

AI Search Models integrate broad unstructured knowledge with light personalization, excelling at exploration and general Q&A.

AI Shopping Models integrate structured retail data and live inventory with deep personalization, excelling at product discovery and conversion.

For an AI Visibility Engineer, these differences are crucial. Visibility isn’t just about whether your brand appears in AI answers—it’s about ensuring:

Your structured data is optimized and AI-ready.

Your brand and product signals are discoverable, fresh, and accurate.

Your personalization strategies feel helpful, not intrusive.

In the age of AI-powered retail, data isn’t just the fuel for AI—it’s the UX itself.

The AI Visibility Playbook: Search vs. Shopping Models

AI visibility has become a new frontier for brands. Where once the goal was to rank high on Google’s results page, today the challenge is to ensure that large language models (LLMs) and AI-powered assistants surface your brand, product, or service when customers ask questions. Yet not all AI systems are created equal. AI Search Models (e.g., ChatGPT, Claude, Gemini, Perplexity) are designed to provide answers, while AI Shopping Models (e.g., Amazon Rufus, Walmart Sparky, Target Bullseye) are designed to drive purchases.

For brands, this means two different—but overlapping—playbooks. Some tactics apply broadly across both categories, while others are unique to the distinct data pipelines, personalization strategies, and user experiences of search vs. shopping AI.

The Brand Visibility Challenge Across AI Systems

Before diving into the playbooks, it’s helpful to recap how these models operate:

AI Search Models draw from vast unstructured data (Wikipedia, news sites, forums, academic research). They integrate knowledge through pretraining and retrieval (web search APIs, uploaded docs). Visibility depends on citations, mentions, and structured knowledge graphs that LLMs can access.

AI Shopping Models ingest structured product catalogs (names, prices, reviews, stock levels) and connect directly to retailer ecosystems. They personalize outputs based on user purchase history, loyalty data, and context. Visibility here depends on catalog quality, schema markup, and integration with retailer platforms.

The Overlapping Tactics

Some tactics improve visibility across both search and shopping models:

Structured Data & Schema

Citations & Mentions

Conversational Content

Reputation & Reviews
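For the structured data tactic, one widely used format is schema.org `Product` markup embedded in a page as JSON-LD. The sketch below builds such a payload from a product record. The property names (`name`, `sku`, `brand`, `offers`, `price`, `priceCurrency`, `availability`, `aggregateRating`, `ratingValue`, `reviewCount`) come from the schema.org vocabulary; the input field names and the sample product are hypothetical, and how heavily any given AI assistant relies on this markup is an assumption rather than something established here.

```python
import json


def product_jsonld(p: dict) -> str:
    """Build a schema.org Product object as a JSON-LD string from a hypothetical product record."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": p["name"],
        "sku": p["sku"],
        "brand": {"@type": "Brand", "name": p["brand"]},
        "description": p["description"],
        "image": p["images"],
        "offers": {
            "@type": "Offer",
            "price": f"{p['price']:.2f}",
            "priceCurrency": p["currency"],
            "availability": "https://schema.org/InStock" if p["in_stock"] else "https://schema.org/OutOfStock",
        },
    }
    # Only emit a rating block when there are reviews to back it.
    if p.get("review_count"):
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": p["rating"],
            "reviewCount": p["review_count"],
        }
    return json.dumps(data, indent=2)


# Made-up sample product.
sample = {
    "name": "Trail Pro Running Shoe", "sku": "TP-100", "brand": "ExampleBrand",
    "description": "Lightweight trail shoe with a lugged outsole.",
    "images": ["https://example.com/img/tp-100.jpg"],
    "price": 59, "currency": "USD", "in_stock": True, "rating": 4.6, "review_count": 128,
}
print(f'<script type="application/ld+json">\n{product_jsonld(sample)}\n</script>')
```

Generating the markup from the same record that feeds the catalog keeps price and availability consistent across the page, the feed, and whatever an AI system reads.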

The Unique Playbook for AI Search Models

Brands aiming to increase their presence in AI search assistants should focus on:

Citation-Heavy Content Strategy

Knowledge Graph Integration

Hallucination Testing

FAQ & Evergreen Content

📌 Success Metric: Does the brand appear in AI answers with citations, accurate context, and trusted references?
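That success metric can be approximated with a simple audit over AI answers you have already collected: does the brand appear, is a citation to a trusted domain present, and does the answer repeat any known-false claim? This is a sketch under assumptions: how answers are gathered from each assistant is out of scope, the brand, domains, and false-claim phrases are hypothetical, and plain substring matching is a crude stand-in for real claim checking.

```python
import re
from urllib.parse import urlparse

URL_RE = re.compile(r"https?://[^\s)\]]+")


def audit_answer(answer: str, brand: str, trusted_domains: set[str], known_false_claims: list[str]) -> dict:
    """Check one collected AI answer for a brand mention, a trusted citation, and known-false claims."""
    text = answer.lower()
    cited = {urlparse(u).netloc.lower().removeprefix("www.") for u in URL_RE.findall(answer)}
    return {
        "mentioned": brand.lower() in text,
        "cited": bool(cited & trusted_domains),
        "false_claims": [c for c in known_false_claims if c.lower() in text],
    }


def visibility_report(answers: list[str], brand: str, trusted_domains: set[str], known_false_claims: list[str]) -> dict:
    """Aggregate audits into mention rate, citation rate, and indices of answers that need correcting."""
    audits = [audit_answer(a, brand, trusted_domains, known_false_claims) for a in answers]
    n = len(audits) or 1
    return {
        "mention_rate": sum(a["mentioned"] for a in audits) / n,
        "citation_rate": sum(a["cited"] for a in audits) / n,
        "flagged": [i for i, a in enumerate(audits) if a["false_claims"]],
    }


# Made-up sample answers.
answers = [
    "ExampleBrand's Trail Pro has a lugged outsole (https://www.examplebrand.com/trail-pro).",
    "Popular options include several trail shoes from other makers.",
    "ExampleBrand discontinued the Trail Pro in 2020.",
]
print(visibility_report(answers, "ExampleBrand", {"examplebrand.com"}, ["discontinued"]))
```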

The Unique Playbook for AI Shopping Models

Brands wanting to dominate in AI shopping assistants must go deeper into retail integration:

Catalog Optimization

Retailer Data Feeds

Attribution & Conversion Tracking

Multimodal Assets

Personalization Hooks

📌 Success Metric: Does the brand appear in AI-powered product recommendations, and do those mentions translate into cart additions and conversions?
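The shopping metric combines two ratios: how often the brand is recommended by the assistant, and how often those recommendations lead to cart additions and purchases. A minimal sketch, assuming a hypothetical event log with `session`, `event` (`recommended`, `add_to_cart`, `purchase`), and `brand` fields; real retailer reporting formats and attribution windows will differ.

```python
def brand_funnel(events: list[dict], brand: str) -> dict:
    """Compute recommendation share and downstream cart/purchase rates for one brand."""
    all_sessions = {e["session"] for e in events}

    def sessions_with(kind: str) -> set[str]:
        return {e["session"] for e in events if e["event"] == kind and e.get("brand") == brand}

    def rate(part: set, whole: set) -> float:
        return len(part) / len(whole) if whole else 0.0

    recommended = sessions_with("recommended")
    # Simplifying assumption: a cart or purchase counts only if the same session saw a recommendation.
    carted = sessions_with("add_to_cart") & recommended
    purchased = sessions_with("purchase") & recommended
    return {
        "recommendation_share": rate(recommended, all_sessions),
        "add_to_cart_rate": rate(carted, recommended),
        "conversion_rate": rate(purchased, recommended),
    }


# Made-up sample event log.
events = [
    {"session": "s1", "event": "recommended", "brand": "ExampleBrand"},
    {"session": "s1", "event": "add_to_cart", "brand": "ExampleBrand"},
    {"session": "s1", "event": "purchase", "brand": "ExampleBrand"},
    {"session": "s2", "event": "recommended", "brand": "ExampleBrand"},
    {"session": "s3", "event": "recommended", "brand": "OtherBrand"},
    {"session": "s3", "event": "add_to_cart", "brand": "OtherBrand"},
    {"session": "s4", "event": "recommended", "brand": "ExampleBrand"},
    {"session": "s4", "event": "add_to_cart", "brand": "ExampleBrand"},
]
print(brand_funnel(events, "ExampleBrand"))
```

Session-level attribution is the simplest possible rule; a production setup would more likely tie each cart event to the specific recommendation that preceded it.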

Comparative Playbook Table

While AI Search Models and AI Shopping Models share some overlapping visibility tactics—structured data, citations, conversational content—their core strategies diverge.

Search AI Visibility is about being cited, trusted, and accurate in broad knowledge domains.

Shopping AI Visibility is about being integrated, complete, and personalized in retail ecosystems.

For brands, this means developing two complementary playbooks:

One that ensures your brand is part of the knowledge fabric of the web (search).

Another that ensures your products are discoverable, well-described, and conversion-ready in retailer ecosystems (shopping).

The most advanced visibility strategies recognize the overlap but double down on the unique requirements of each AI system. In the age of LLM-powered discovery, visibility equals viability—and the brands that invest early in both search and shopping AI playbooks will lead in consumer mindshare and market share.

Conclusion

The advent of AI Search Models and AI Shopping Models marks a new era where information discovery and product discovery increasingly converge through natural language. Yet their missions remain distinct:

Search AI is designed to satisfy curiosity, surface broad knowledge, and guide exploration.

Shopping AI is designed to close the loop, blending conversation with commerce and driving conversion.

From a user experience perspective, search models excel at providing accurate, context-rich answers, while shopping models integrate structured data, reviews, and personalization to mimic the role of a personal shopper. From a data integration standpoint, search models draw on vast, unstructured web sources with light personalization, whereas shopping models connect to live retail ecosystems—product catalogs, inventory, loyalty profiles—with deep personalization at their core.

For AI visibility engineers, these differences are crucial. Visibility is no longer just about being “present” in a search index—it’s about ensuring that:

Structured data is optimized and AI-ready.

Brand and product signals are discoverable, fresh, and accurate.

Personalization strategies feel helpful, not intrusive.

The playbooks diverge:

Search AI visibility is about being cited, trusted, and accurate in knowledge domains.

Shopping AI visibility is about being integrated, complete, and conversion-ready in retail ecosystems.

Both, however, push us to rethink UX design, data strategy, and system integration. The most advanced strategies recognize the overlap—structured content, citations, conversational FAQs—but also double down on what makes each system unique.

Ultimately, organizations that master the interplay between search AI and shopping AI—while keeping a keen focus on discoverability, interpretability, and user trust—will lead the way. In the age of LLM-powered discovery, visibility equals viability. The companies that design not just better models but better experiences will transform cool demos into trusted, game-changing tools, setting the standard for the next decade of AI-driven search and commerce.